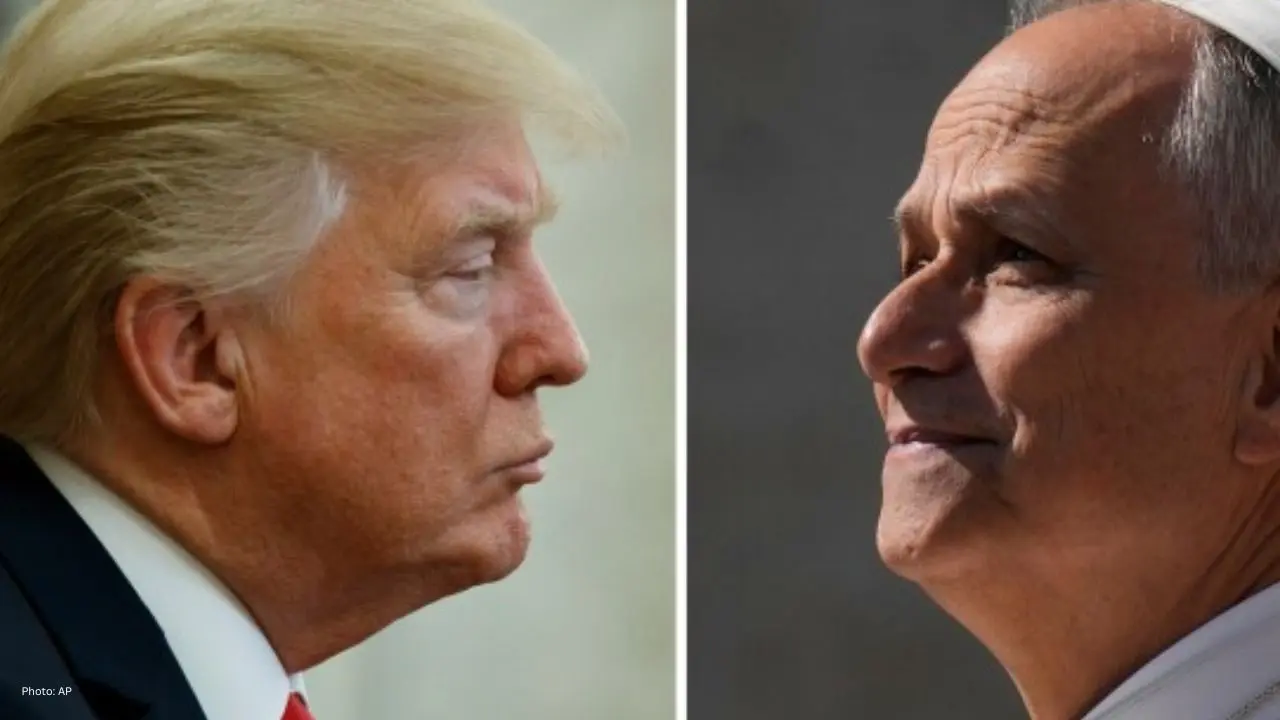

Trump Says Iran War Close to Ending Amid Fresh Tal

Donald Trump says the Iran conflict is close to ending, as the U.S. pushes for a deal while Vice Pre

AI technologies have proliferated quickly in recent years, often outpacing regulatory frameworks. This rapid expansion has enabled companies to launch powerful AI systems in consumer products without comprehensive guidelines. However, as AI technologies evolve—enabling decision-making and personal data analysis—global concerns have intensified.

Governments have been grappling with whether AI should be classified as a consumer good, a public utility, or a potential national-security threat. As the urgency for regulatory intervention grows, a consensus is forming that some form of oversight is needed.

Experts argue that we are entering a regulated era of AI, in which safety, accountability, and transparency guide development. Rather than letting AI systems grow unchecked, forthcoming laws are expected to set defined ethical boundaries for their use.

The implications will not be limited to large companies; individual lives will be impacted across homes, workplaces, and schools.

Understanding these regulations requires appreciating how extensively AI is woven into our everyday lives.

From email applications that predict your next words to chatbots that respond instantly, AI is continuously at work. Even many spell-checkers rely on machine learning models trained on large collections of sentences.

Streaming and music services utilize sophisticated recommendation algorithms, adapting to your interactions—what you play, skip, or save.

Fraud alerts, tailored spending insights, and loan risk assessments depend heavily on AI-driven analytics.

GPS systems, ride-sharing, and delivery apps utilize machine learning for traffic predictions, routing, and arrival estimates.

E-commerce platforms analyze user habits to personalize recommendations, predict shopping trends, and customize user experiences.

From facial recognition to battery management, AI enhancements are prevalent in mobile devices.

As a result of these integrations, even minor shifts in AI regulations could have sweeping effects on daily life.

AI systems learn by analyzing user data, including phone usage, typing behavior, and preferences. Regulators believe users should know how that data is collected and used.

AI systems can unintentionally reflect bias present in training data, leading to unequal treatment. New regulations aim to uphold fairness.

Advanced AI systems can make incorrect predictions that have real consequences. Regulators want rigorous testing to be required before deployment.

If an AI system causes harm, determining the responsible party, whether the developer, the manufacturer, or the user, is complex. New safety laws aim to clarify liability.

Sophisticated AI can be misused for harmful purposes such as misinformation or cyberattacks, prompting stricter regulations to mitigate such risks.
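To make the fairness concern above more concrete, here is a minimal sketch of one way an auditor might look for unequal treatment: compare how often each group receives a positive outcome. The function names, group labels, and sample data are hypothetical, and the approval-rate gap is only one of several possible fairness measures; no regulation discussed here is known to mandate this specific check.

```python
from collections import defaultdict

def approval_rates(decisions):
    """Return the share of positive outcomes per group.

    `decisions` is an iterable of (group, approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        if ok:
            approved[group] += 1
    return {g: approved[g] / totals[g] for g in totals}

def parity_gap(decisions):
    """Largest difference in approval rate between any two groups."""
    rates = approval_rates(decisions)
    return max(rates.values()) - min(rates.values())

# Hypothetical audit sample: (group label, was the request approved?)
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]

print(approval_rates(sample))  # group A: 0.75, group B: 0.25
print(parity_gap(sample))      # 0.5
```

A large gap does not prove bias on its own, but it flags a system for closer review before deployment.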

These discussions have direct implications for everyday AI tools.

Here's a closer look at how various daily AI tools may adjust:

Messaging applications might need to clearly label AI-generated responses or suggestions, ensuring users recognize AI involvement.

Clearer settings regarding data storage and analysis could be mandated, prompting user consent before activating AI features.

Restrictions on data types could result in predictive text becoming less personalized and accurate.
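The labelling and consent ideas for messaging apps can be sketched in a few lines. This is an illustrative assumption about how an app might implement them, not a description of any real product: `UserSettings`, `suggest_reply`, and the label text are all hypothetical names.

```python
from dataclasses import dataclass

@dataclass
class UserSettings:
    # Hypothetical setting: AI suggestions stay off until the user opts in.
    ai_suggestions_consent: bool = False

def suggest_reply(message: str) -> str:
    """Stand-in for a real model call; returns a canned reply."""
    return "Sounds good, see you then!"

def get_suggestion(message: str, settings: UserSettings):
    """Return a labelled AI suggestion, or None if the user has not consented."""
    if not settings.ai_suggestions_consent:
        return None
    return {
        "text": suggest_reply(message),
        "label": "AI-generated suggestion",  # shown next to the text in the UI
    }

print(get_suggestion("Lunch at noon?", UserSettings()))
print(get_suggestion("Lunch at noon?", UserSettings(ai_suggestions_consent=True)))
```

The design choice to default consent to `False` reflects the opt-in approach described above; an opt-out design would flip that default.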

AI may face restrictions on analyzing sensitive attributes, which could make social media feeds less narrowly targeted.

AI-enhanced posts or modifications may need clear indications, affecting a variety of media content.

Platforms may need to implement verifiable systems to ensure minors are protected from harmful content.

Banks might have to provide detailed explanations for AI-driven loan decisions, ensuring transparency.

AI systems may have to undergo rigorous checks, which could slow fraud detection.

If limitations are introduced regarding data collection, banks could reduce personalized offerings.
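As a rough illustration of what a "detailed explanation" for an AI-driven loan decision could look like, the sketch below scores an applicant with a simple weighted sum and reports which factors pulled the score down. The weights, threshold, and field names are invented for this example; real credit models and any legally required explanation format would differ.

```python
# Hypothetical weights and cut-off, chosen only for illustration.
WEIGHTS = {"income_to_debt": 2.0, "missed_payments": -1.5, "years_employed": 0.5}
THRESHOLD = 3.0

def score_with_reasons(applicant):
    """Score an applicant and list the factors that lowered the score."""
    contributions = {name: WEIGHTS[name] * applicant[name] for name in WEIGHTS}
    score = sum(contributions.values())
    # Negative contributions, most harmful first, serve as the explanation.
    negative = sorted((value, name) for name, value in contributions.items() if value < 0)
    return {
        "approved": score >= THRESHOLD,
        "score": score,
        "reasons": [name for _, name in negative],
    }

applicant = {"income_to_debt": 1.5, "missed_payments": 2, "years_employed": 1}
print(score_with_reasons(applicant))
# {'approved': False, 'score': 0.5, 'reasons': ['missed_payments']}
```

A transparent model like this is easy to explain; more complex models would need separate explanation techniques to produce a comparable list of reasons.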

Regulations might require mapping applications to route more cautiously, which could change travel-time estimates.

Ride-sharing platforms may face closer scrutiny of how and why fares change.

Users may receive detailed reports on how their location data is utilized.

As regulations become stricter, personalized shopping suggestions may start to feel more generic.

Retailers might have to explain price variability across different consumers.

Platforms may need to vet AI-generated reviews to ensure authenticity.

In light of privacy concerns, voice assistants might transition from cloud to device-based data processing.

Voice recognition tools may need to inform users when AI processes their commands.

Always-on capabilities may face limitations to reduce data collection.

AI-generated visuals may require clear watermarks for authentication purposes.

There may be restrictions to prevent the creation of harmful or misleading AI content.

Organizations may need to disclose the data sets their AI models are trained on for clearer understanding.

Offices using AI software for productivity assessment may face regulations limiting intrusive tracking.

Hiring platforms may be prevented from assessing candidates based on sensitive traits during interviews.

AI-driven decision-making may necessitate human oversight to ensure equity.

Users will notice labels and consent notices as AI becomes more transparent.

Enhanced safety checks could delay updates or limit functionalities until they meet compliance standards.

Stricter regulations may enhance trust in AI tools while compromising on personalization.

Users will gain more control over privacy settings and data usage.

Expect new options on apps once these laws take effect.

Features may require explicit consent, so understanding these will help in making informed choices.

Expect features to evolve or temporarily disappear to align with new compliance requirements.

AI labels and alerts will help you recognize when AI affects your interactions.

Expect stronger identity checks and heightened safety protocols.

While AI is set to become safer and more transparent, the pace of innovation may slow as compliance takes precedence. What remains certain is the continued influence of AI on our lives; these new regulations aim to enhance overall security.

The most perceptible change for users will be heightened awareness. Previously unnoticed AI functions will be made evident, with clear labelling and greater control. The era of “silent AI” is concluding, paving the way for a more responsible AI landscape.

This article is intended for informational and educational purposes only and does not constitute legal, financial, or professional advice. AI regulations may evolve and vary by jurisdiction.